Optical archiving is one of the few reliable long-term storage methods, but the workflow is fundamentally broken:

Result: a slow, error-prone workflow that requires constant attention.

I was archiving a large photo dataset using multiple optical drives (2×BD, 2×DVD). Existing tools supported only single-drive workflows or mirrored duplication, and both required continuous manual intervention.

This system was built because no macOS tools supported independent multi-drive scheduling with verification.

Build a system that:

The core problem was not burning discs; it was coordinating hardware into a reliable system.

The solution treats each drive as an independent worker and introduces a queue and policy layer to manage execution, instead of relying on linear, manual control.

Optical drives operate as independent, stateful devices with no shared control layer.

The system treats each drive as an isolated worker, rather than attempting to abstract them into a single unified device.
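A minimal sketch of one drive as an isolated worker, written in Python for illustration. It assumes a hypothetical set of lifecycle states (`IDLE`, `AWAITING_MEDIA`, `BURNING`, `VERIFYING`, `DONE`, `FAILED`) and a hypothetical `DriveWorker` class; the real system's states and names are not given here. Each worker owns its own state and rejects transitions that make no physical sense, so one drive's progress never depends on another's.

```python
from enum import Enum, auto


class DriveState(Enum):
    """Hypothetical lifecycle states for one optical drive."""
    IDLE = auto()
    AWAITING_MEDIA = auto()
    BURNING = auto()
    VERIFYING = auto()
    DONE = auto()
    FAILED = auto()


# Allowed transitions; anything not listed here is rejected.
TRANSITIONS = {
    DriveState.IDLE: {DriveState.AWAITING_MEDIA},
    DriveState.AWAITING_MEDIA: {DriveState.BURNING, DriveState.IDLE},
    DriveState.BURNING: {DriveState.VERIFYING, DriveState.FAILED},
    DriveState.VERIFYING: {DriveState.DONE, DriveState.FAILED},
    DriveState.DONE: {DriveState.IDLE},
    DriveState.FAILED: {DriveState.IDLE},
}


class DriveWorker:
    """One drive = one worker with private state; nothing is shared between drives."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.state = DriveState.IDLE

    def transition(self, new_state: DriveState) -> None:
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"{self.name}: illegal transition {self.state.name} -> {new_state.name}"
            )
        self.state = new_state
```

Two workers created this way advance independently: moving `BD-1` into `BURNING` leaves `BD-2` in `IDLE`.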

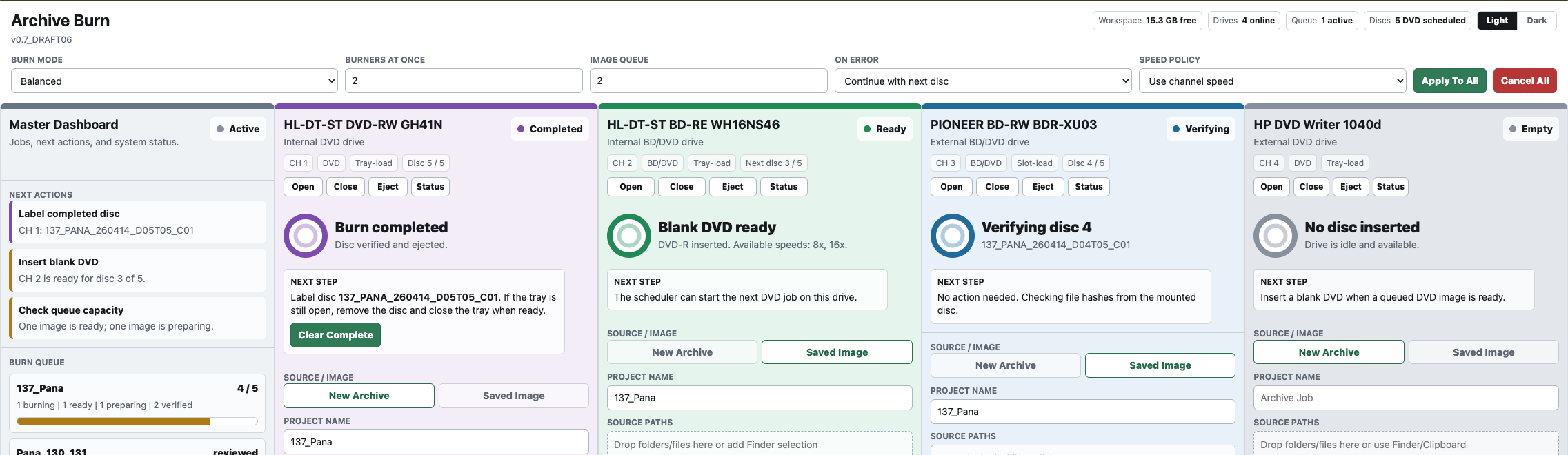

To coordinate multiple independent drives, a centralized queue system was introduced.

This replaces manual sequencing with deterministic execution.
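The queue can be sketched as a FIFO list of prepared images plus a placement policy. This is an illustrative sketch, not the system's actual scheduler: `BurnQueue`, `Job`, `Drive`, and the smallest-fitting-media rule are assumptions, and the capacities are nominal single-layer values (25 GB BD, 4.7 GB DVD) rather than measured ones. Because drives are sorted once and jobs are taken oldest first, the same queue and drive set always produce the same assignments.

```python
from collections import deque
from dataclasses import dataclass

# Assumed nominal single-layer capacities in bytes (decimal GB, not measured).
MEDIA_CAPACITY = {"BD": 25_000_000_000, "DVD": 4_700_000_000}


@dataclass
class Job:
    image: str        # path to a prepared ISO image
    size_bytes: int


@dataclass
class Drive:
    name: str
    media: str        # "BD" or "DVD"
    busy: bool = False


class BurnQueue:
    """FIFO job queue with a simple placement policy; same inputs, same assignments."""

    def __init__(self, drives: list[Drive]) -> None:
        # Policy: try the smallest media that fits first, so DVD-sized jobs go to
        # a DVD drive when one is free. Ties are broken by drive name.
        self.drives = sorted(drives, key=lambda d: (MEDIA_CAPACITY[d.media], d.name))
        self.pending: deque[Job] = deque()

    def submit(self, job: Job) -> None:
        self.pending.append(job)

    def dispatch(self) -> list[tuple[str, str]]:
        """Assign pending jobs, oldest first, to idle drives; return (drive, image) pairs."""
        assignments = []
        for job in list(self.pending):
            for drive in self.drives:
                if not drive.busy and job.size_bytes <= MEDIA_CAPACITY[drive.media]:
                    drive.busy = True
                    self.pending.remove(job)
                    assignments.append((drive.name, job.image))
                    break
        return assignments

    def release(self, drive_name: str) -> None:
        """Mark a drive idle again once its disc is finished and removed."""
        for drive in self.drives:
            if drive.name == drive_name:
                drive.busy = False
```

With the 2×BD, 2×DVD setup, a 20 GB image lands on `BD-1` while two 4 GB images go to `DVD-1` and `DVD-2`; an image larger than any media stays pending instead of failing mid-burn.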

The system responds to physical events rather than relying on linear execution.

This allows the system to operate asynchronously across multiple devices.
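Event-driven coordination can be sketched as a thread-safe event queue with one handler per event kind. In practice, events such as a disc being inserted would come from OS notifications (on macOS, for example, the Disk Arbitration framework); here they are posted by hand. The event kinds, the `EventLoop` class, and the simplified status strings are hypothetical.

```python
import queue
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    kind: str     # hypothetical kinds: "media_inserted", "burn_finished", "media_ejected"
    drive: str


class EventLoop:
    """Reacts to physical events as they arrive instead of stepping through a fixed script."""

    def __init__(self) -> None:
        self.events: "queue.Queue[Event]" = queue.Queue()
        self.status: dict[str, str] = {}
        self.handlers = {
            "media_inserted": self.on_media_inserted,
            "burn_finished": self.on_burn_finished,
            "media_ejected": self.on_media_ejected,
        }

    def post(self, event: Event) -> None:
        # queue.Queue is thread-safe, so an OS callback thread can post directly.
        self.events.put(event)

    def on_media_inserted(self, drive: str) -> None:
        self.status[drive] = "burning"

    def on_burn_finished(self, drive: str) -> None:
        self.status[drive] = "verifying"

    def on_media_ejected(self, drive: str) -> None:
        self.status[drive] = "idle"

    def drain(self) -> None:
        """Handle every queued event in arrival order; unknown kinds are ignored."""
        while True:
            try:
                event = self.events.get_nowait()
            except queue.Empty:
                return
            handler = self.handlers.get(event.kind)
            if handler is not None:
                handler(event.drive)
```

Each drive's status changes only when an event names that drive, so drives progress at their own pace.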

All burn operations are performed from prepared ISO images rather than raw source data.

This separates data integrity from hardware variability.
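Burning from a fixed image makes verification a byte comparison: hash the image once before burning, then hash the same number of bytes read back from the finished disc. The sketch below assumes the image already exists (on macOS it could be built with a tool such as `hdiutil makehybrid`) and that the burned disc can be read back as a file or raw device path; `sha256_of` and `verify_burn` are hypothetical helpers.

```python
import hashlib
from pathlib import Path
from typing import Optional

CHUNK = 1 << 20  # 1 MiB reads keep multi-gigabyte images out of memory


def sha256_of(path: Path, limit: Optional[int] = None) -> str:
    """SHA-256 of a file, or of only its first `limit` bytes, read in chunks."""
    digest = hashlib.sha256()
    remaining = limit
    with path.open("rb") as f:
        while remaining is None or remaining > 0:
            size = CHUNK if remaining is None else min(CHUNK, remaining)
            block = f.read(size)
            if not block:
                break
            digest.update(block)
            if remaining is not None:
                remaining -= len(block)
    return digest.hexdigest()


def verify_burn(image: Path, readback: Path) -> bool:
    """True if the bytes read back from the disc match the source image.

    Only the image's length is compared, on the assumption that a raw read
    of the disc may return padding past the end of the written data.
    """
    expected = sha256_of(image)
    actual = sha256_of(readback, limit=image.stat().st_size)
    return expected == actual
```

Because the expected hash comes from the image rather than the source folder, a verification failure points at the burn or the media, not at the data preparation step.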

Hardware failures are treated as localized events: a failure on one drive affects only that drive and its current job.

This allows partial progress without interrupting the rest of the system.
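One way to keep failures local is to catch a drive error at the point where that drive runs its job, take only that drive out of rotation, and requeue the job for a healthy drive. This is a hypothetical sketch: the `Scheduler` class, the use of `OSError` to signal a hardware fault, and the `MAX_ATTEMPTS` retry budget are assumptions.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable

MAX_ATTEMPTS = 3  # hypothetical retry budget before a job is set aside for the operator


@dataclass
class Job:
    image: str
    attempts: int = 0


class Scheduler:
    """Contains a hardware failure to the drive and job it happened on."""

    def __init__(self, drives: list[str], burn: Callable[[str, str], None]) -> None:
        self.drives = drives
        self.burn = burn                      # assumed to raise OSError on a hardware fault
        self.pending: deque = deque()
        self.failed_drives: set[str] = set()
        self.completed: list[tuple[str, str]] = []
        self.parked: list[Job] = []

    def submit(self, image: str) -> None:
        self.pending.append(Job(image))

    def run_round(self) -> None:
        """Give each healthy drive at most one job."""
        for drive in self.drives:
            if drive in self.failed_drives or not self.pending:
                continue
            job = self.pending.popleft()
            try:
                self.burn(drive, job.image)
            except OSError:
                self.failed_drives.add(drive)   # only this drive leaves rotation
                job.attempts += 1
                if job.attempts < MAX_ATTEMPTS:
                    self.pending.append(job)    # a healthy drive can retry it
                else:
                    self.parked.append(job)     # stop retrying; flag for the operator
            else:
                self.completed.append((drive, job.image))
```

If `DVD-2` throws a write error, its job returns to the queue and `BD-1` picks it up on the next round; completed discs are unaffected.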

The system balances parallel execution with physical constraints.

This maintains consistent performance under real-world conditions.
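Balancing parallelism against physical limits can be sketched as one thread per drive with a semaphore capping how many burns run at once. The limit of two and the reason for it (for example, drives sharing one USB bus) are assumptions, as are the `BurnGate` and `run_drives` names; the sleep stands in for a real burn.

```python
import threading
import time
from typing import Callable

MAX_CONCURRENT_BURNS = 2  # assumed limit, e.g. several drives sharing one USB bus


class BurnGate:
    """Lets every drive keep its own worker thread while capping simultaneous burns."""

    def __init__(self, limit: int = MAX_CONCURRENT_BURNS) -> None:
        self._slots = threading.BoundedSemaphore(limit)
        self._lock = threading.Lock()
        self.active = 0
        self.peak = 0   # highest number of burns observed running at once

    def burn(self, work: Callable[[], None]) -> None:
        with self._slots:                  # blocks while `limit` burns are already running
            with self._lock:
                self.active += 1
                self.peak = max(self.peak, self.active)
            try:
                work()
            finally:
                with self._lock:
                    self.active -= 1


def run_drives(drive_names: list[str], gate: BurnGate, seconds: float) -> None:
    """Start one worker thread per drive, each doing a simulated burn of `seconds`."""
    threads = [
        threading.Thread(target=gate.burn, args=(lambda: time.sleep(seconds),), name=name)
        for name in drive_names
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
```

Changing the limit touches only the gate, not the per-drive workers, which keeps the physical constraint in one place.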

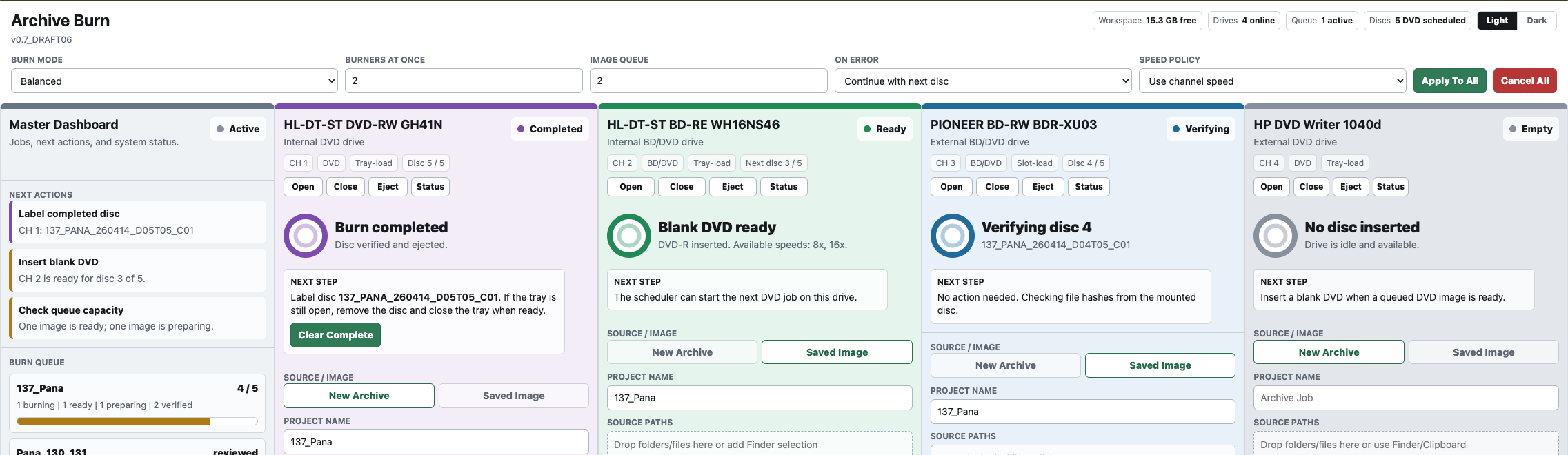

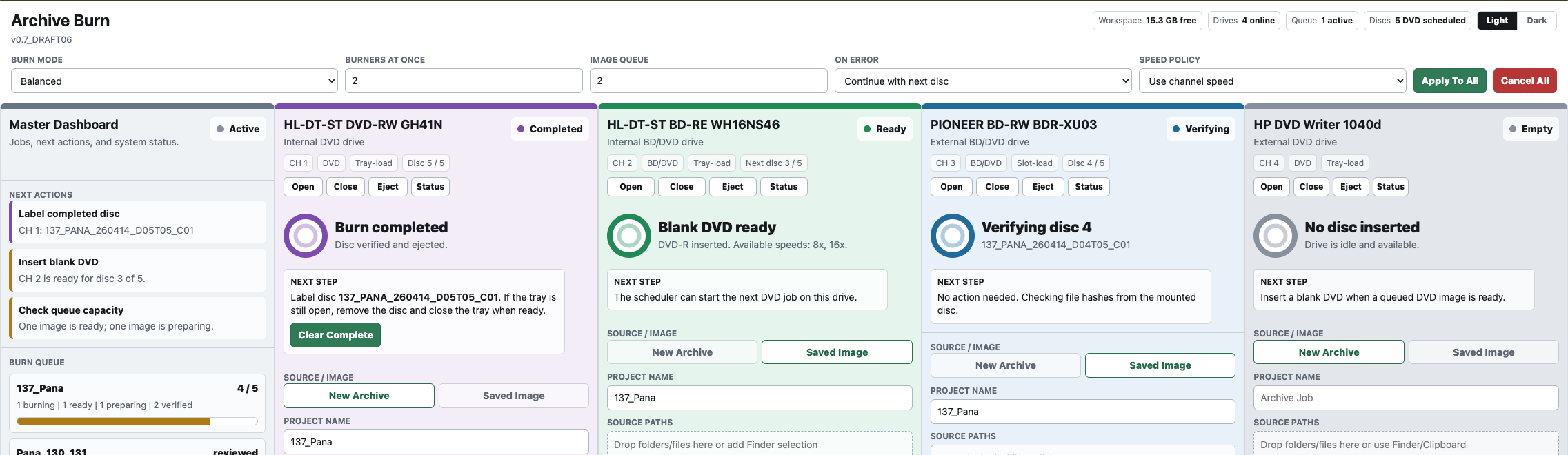

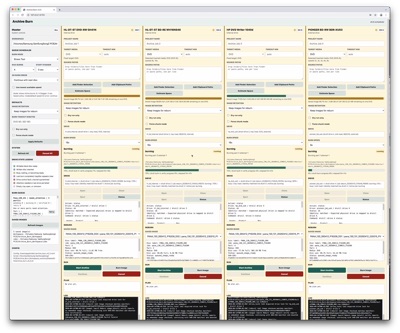

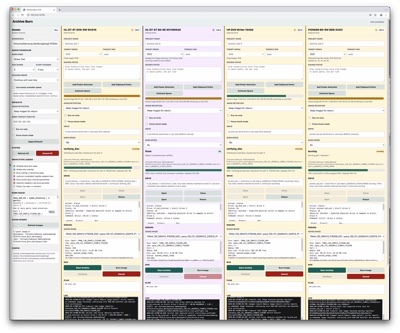

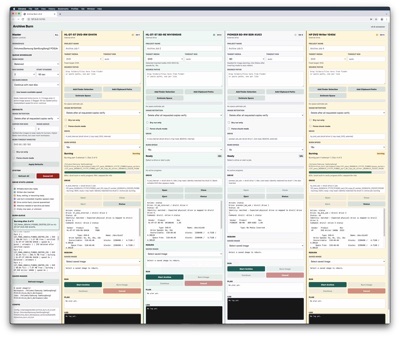

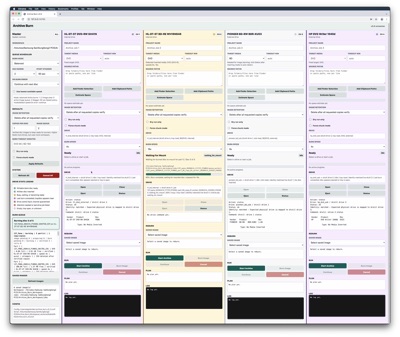

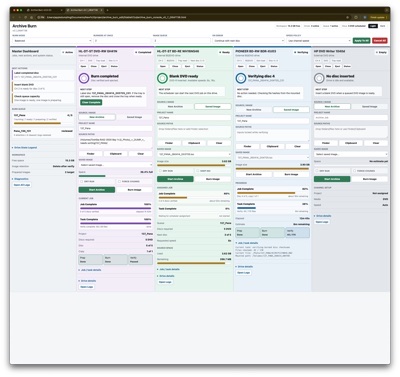

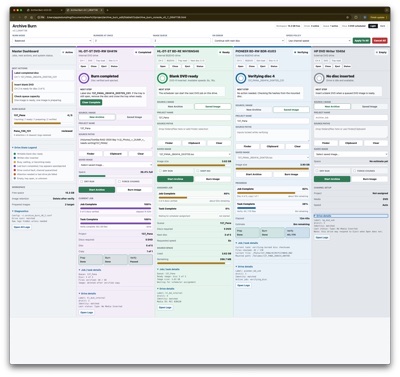

The interface was designed to support long-running, multi-device operations with minimal user intervention, focusing on clarity of system state rather than traditional control-heavy UI patterns. The closest precedent was a studio audio mixer: several channel inputs run side by side, and a master section controls all of them at once.

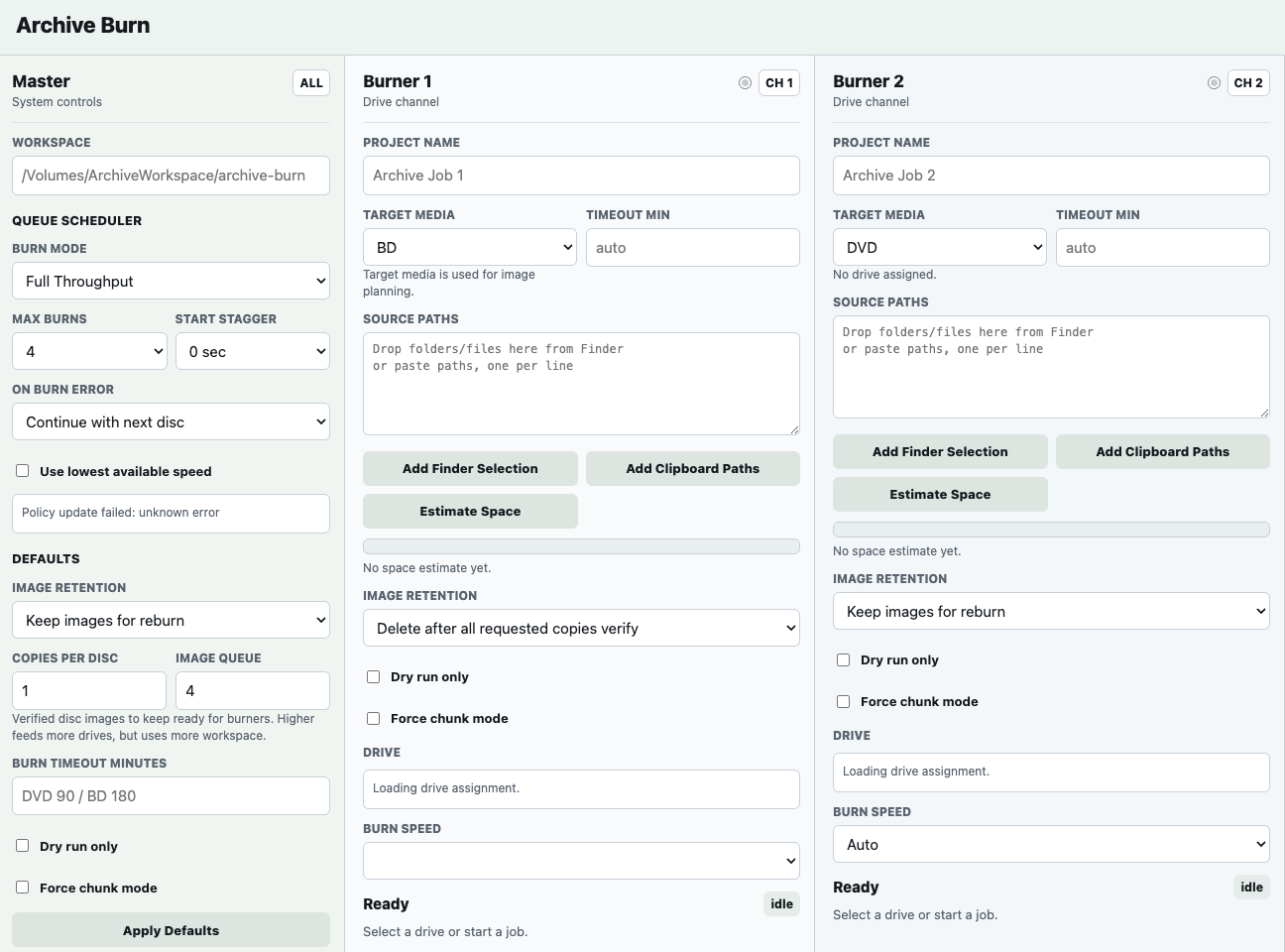

The UI is structured as a live dashboard, showing:

This allows the entire system to be understood at a glance without navigating between views.

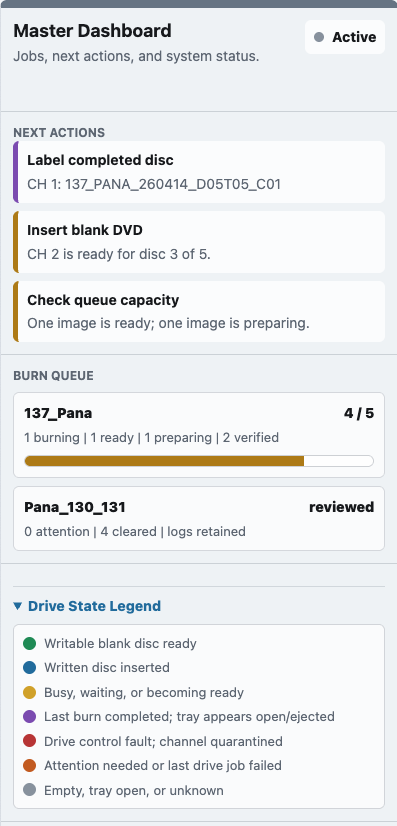

Each optical drive is represented as an independent “channel,” with its own:

This mirrors the underlying architecture, where each drive operates as an independent worker.

Visual states are used to communicate system behavior directly:

The interface prioritizes immediate recognition of system conditions over detailed inspection.

Each active channel presents a clear “Next Step,” indicating:

This reduces ambiguity and enables the system to run unattended for extended periods.
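The “Next Step” indicator can be derived directly from each channel's state, so the UI never has to guess what the operator should do. The states, messages, and the `needs_operator` flag below are illustrative assumptions, not the interface's actual wording.

```python
from dataclasses import dataclass
from enum import Enum


class State(Enum):
    NEEDS_DISC = "needs_disc"
    BURNING = "burning"
    VERIFYING = "verifying"
    VERIFIED = "verified"
    FAILED = "failed"


@dataclass(frozen=True)
class NextStep:
    message: str
    needs_operator: bool


# Hypothetical mapping from channel state to the prompt shown on that channel.
NEXT_STEP = {
    State.NEEDS_DISC: NextStep("Insert a blank disc", True),
    State.BURNING: NextStep("Wait: burning in progress", False),
    State.VERIFYING: NextStep("Wait: verifying disc", False),
    State.VERIFIED: NextStep("Remove and label disc", True),
    State.FAILED: NextStep("Remove disc and check log", True),
}


def next_step(state: State) -> NextStep:
    return NEXT_STEP[state]


def channels_needing_attention(channels: dict[str, State]) -> list[str]:
    """Only these channels should prompt the operator; the rest run unattended."""
    return sorted(name for name, state in channels.items() if NEXT_STEP[state].needs_operator)
```

Keeping the mapping as a single table makes it easy to check that every state has exactly one prompt.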

The UI minimizes required input by:

The operator primarily interacts with the system only when prompted.

Detailed logs and system diagnostics are accessible but not foregrounded, allowing:

This system fundamentally changed the feasibility of maintaining a large-scale optical archive.

Previously, archiving 20+ TB of photos and data required continuous manual supervision, limiting throughput and introducing risk through fatigue and inconsistency. Multi-drive setups provided no practical benefit due to the overhead of coordinating them manually.

By enabling independent, parallel use of multiple drives, the system increases effective throughput without increasing operator effort.

What was previously a linear, time-bound process becomes a scalable pipeline, allowing large datasets to be archived in parallel rather than sequentially.

Integrated verification and structured logging replace implicit trust in the burn process with explicit validation.

Each disc is produced as a verifiable artifact, with a traceable record of its contents and burn history, reducing the risk of silent failure within the archive.
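A traceable per-disc record can be as simple as one JSON object per line in an append-only log. The field names, the `DiscRecord` type, and the JSON Lines format are assumptions made for this sketch; the source does not specify the log schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from pathlib import Path


@dataclass
class DiscRecord:
    """One hypothetical log entry: what went on which disc, and whether it verified."""
    disc_label: str
    image: str
    image_sha256: str
    drive: str
    started_at: str
    finished_at: str
    verified: bool


def utc_now() -> str:
    return datetime.now(timezone.utc).isoformat(timespec="seconds")


def append_record(log_path: Path, record: DiscRecord) -> None:
    # JSON Lines: one object per line; appending never rewrites earlier entries.
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record), sort_keys=True) + "\n")


def load_records(log_path: Path) -> list[DiscRecord]:
    with log_path.open(encoding="utf-8") as f:
        return [DiscRecord(**json.loads(line)) for line in f if line.strip()]


def unverified(records: list[DiscRecord]) -> list[str]:
    """Labels of discs that should be re-burned or inspected."""
    return [r.disc_label for r in records if not r.verified]
```

Querying the log for unverified discs turns “did everything burn correctly?” into a lookup rather than a memory exercise.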

The introduction of queue-based orchestration and action-based guidance removes the need for continuous attention.

The system can operate unattended for extended periods, with the operator only intervening when prompted, reducing fatigue and the likelihood of human error.

At larger scales, the limiting factor in optical archiving is not media capacity but process overhead.

By removing coordination friction, this system makes optical media a practical long-term archive layer for large datasets, rather than a slow, manual fallback.

The project reframes optical archiving from a manual task into a managed system: